How binocular vision is shaped by early visual experience

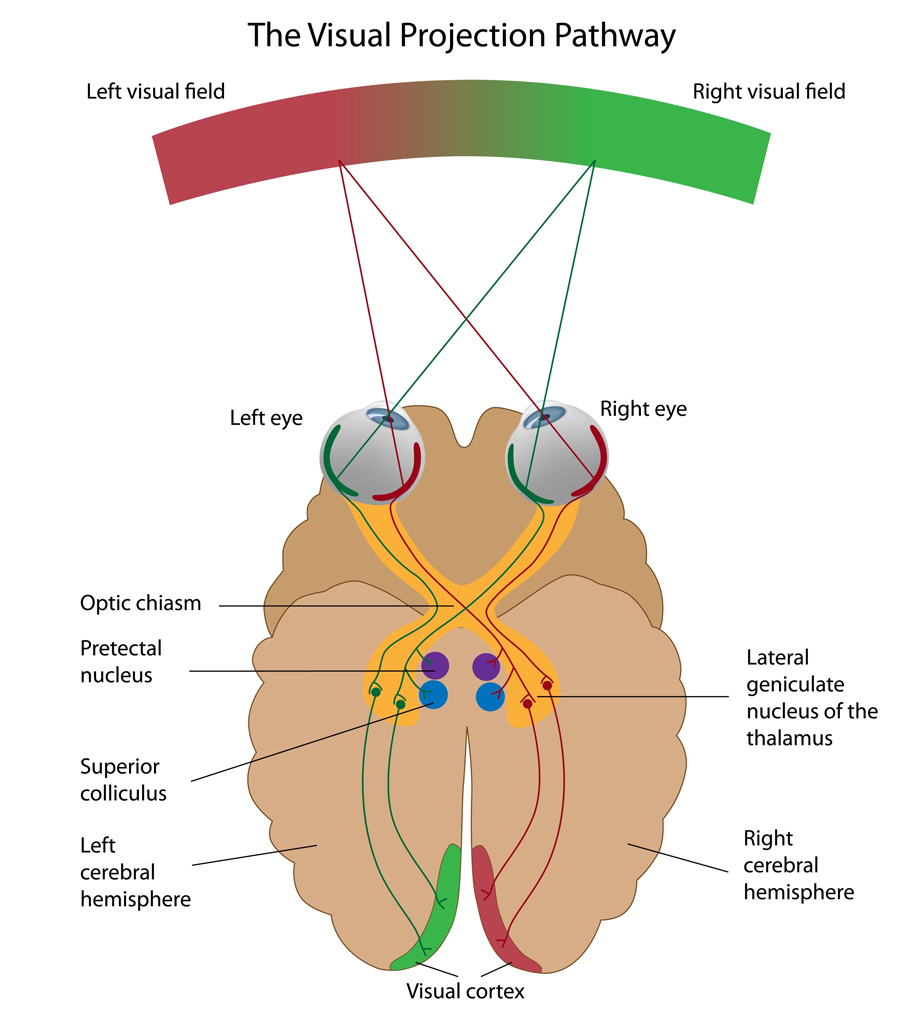

The world that surrounds us is made of an infinity of shapes and colours that we perceive through our eyes. The light that reflects from each and every object in our environment falls onto the retina, a thin layer of tissue that lines the back of our eyes. From there, the visual information is sent to the brain, and more specifically to the visual cortex, where patterns of light are transformed into the vivid sensory experience that we call sight.

The visual cortex receives information from numerous neurons, each of which responds selectively to a specific feature in the visual scene. For example, edges and their orientation in space carry an enormous amount of information about the visual environment and each neuron responds only to a narrow range of edge orientations: horizontal edges activate some neurons, vertical edges activate other neurons and still other neurons respond to different orientations in between. The visual cortex gathers all the incoming information and, depending on which neurons are activated, reconstitutes visual representations of the surrounding environment.

Two eyes, one picture

We look at the world with two eyes but we see only one picture; what both our eyes perceive is unified into a single representation. This constitutes one of the challenges in representing visual information: neurons in the visual cortex have to bring together the signals that come from the left and right eyes to create a single unified binocular representation. The association of the inputs from the two eyes is achieved with a high degree of precision. It is so precise that a neuron that responds to a certain orientation when the left eye is stimulated will respond selectively to the same orientation when the right eye is stimulated.

What has been missing is a clear understanding of the developmental mechanisms that are responsible for uniting the inputs from the two eyes, a gap in knowledge that Dr David Fitzpatrick, Dr Jeremy Chang and Dr David Whitney at the Max Planck Florida Institute for Neuroscience aimed to fill by conducting a series of experiments.

Early visual experience and alignment

The first question that the researchers aimed to address concerns the role of visual experience in the development of vision: is visual experience required for the inputs from the two eyes to be aligned, or does the brain innately know how to create a single unified binocular representation?

The team has approached this question in the ferret. Ferrets have a well-organised visual cortex with a modular structure. A modular system is subdivided into repeated units that operate independently; in a modular visual cortex, different neurons have different functions. Neurons are not randomly positioned: nearby neurons have similar orientation preferences – for example, neurons that respond to horizontal edges are spatially close to neurons that respond to almost horizontal edges.

Dr Fitzpatrick and his collaborators use calcium imaging to visualise neuronal activity: when neurons are activated, calcium levels locally fluctuate. These fluctuations can be detected by combining the use of fluorescent calcium sensors and imaging techniques. So-called activity patterns can then be visualised, giving information about the localisation of active neurons.

More specifically, the researchers used calcium imaging to visualise the patterns of activity associated with different orientations prior to the onset of visual experience. By visually stimulating the two eyes separately, they observed that, as in the mature visual cortex, different neurons responded to different orientations, and nearby neurons had similar orientation preferences. However, the patterns of activity produced by stimulating the left eye with a single orientation differed from the patterns produced by presenting the same orientation to the right eye. This suggests that aligned binocular representations develop in a two-step process. First, in the absence of visual experience, the brain develops orderly network representations of edge orientations (with neurons selectively responding to specific orientations and nearby neurons having similar orientation preferences). Subsequently, after eye opening, these network representations reorganise to give rise to the aligned network representations that are seen in mature animals.
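One way to picture what "different patterns" means is to score how closely two activity maps match. The sketch below is only an illustration, not the team's analysis: it assumes each map is a grid of response strengths and uses the Pearson correlation as a similarity score. The function name `pattern_similarity` and the toy maps are hypothetical and are not data from the study.

```python
import numpy as np

def pattern_similarity(left_map, right_map):
    """Pearson correlation between two activity maps of equal shape."""
    left = np.asarray(left_map, dtype=float).ravel()
    right = np.asarray(right_map, dtype=float).ravel()
    if left.shape != right.shape:
        raise ValueError("activity maps must have the same shape")
    return float(np.corrcoef(left, right)[0, 1])

# Hypothetical toy maps (random numbers, not recordings).
rng = np.random.default_rng(0)
left_eye = rng.random((32, 32))
# "Aligned" case: the right-eye map is the left-eye map plus a little noise.
aligned_right = left_eye + 0.05 * rng.random((32, 32))
# "Misaligned" case: the right-eye map is unrelated to the left-eye map.
misaligned_right = rng.random((32, 32))

print("aligned:", pattern_similarity(left_eye, aligned_right))
print("misaligned:", pattern_similarity(left_eye, misaligned_right))
```

A score near 1 means the two eyes evoke nearly the same pattern, while a score near 0 means the patterns are unrelated.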

With additional experiments, Dr Fitzpatrick and his team confirmed that alignment of the inputs from the two eyes requires visual experience. The first week after eye opening constitutes a critical period for cortical development: over a short period of time, visual experience drives the alignment of monocular network representations. They conclude that early visual experience is critical for proper development of the networks that support binocular vision for the rest of life.

A third representation

The second question the researchers wanted to address is: what would patterns of activity in the visual cortex look like for simultaneous stimulation of the two eyes early in development, before alignment has been achieved? They used the same techniques as described above but, instead of stimulating each eye separately (monocular stimulation), they stimulated both eyes simultaneously (binocular stimulation). While they had shown that monocular stimulation led to two different representations for the left and the right eyes, they found that binocular stimulation led to the appearance of a third, distinct representation.

By tracking these three representations across time, they discovered that the early binocular representation was more stable than monocular representations, appearing most similar to the mature, unified representation that emerges with visual experience. The researchers suggest that early binocular visual experience activates this early binocular representation and, by doing so, guides the reorganisation process that results in the alignment of all three representations as a single coherent network.

Changes in neurons’ preferred orientation

From the knowledge that early visual experience guides the cortical reorganisation process arises a third question: what happens at the cellular scale? The researchers hypothesised that changes in network structure happening during the reorganisation process must reflect changes in the response properties of single neurons.

To answer this question, they used two-photon imaging. Combined with fluorescent calcium sensors, this imaging technique allowed them to visualise the activity of individual neurons. Consistent with the previous observations made at the network scale, individual neurons’ orientation preferences prior to the onset of visual experience were different for the left and the right eyes. This was rectified during the reorganisation process as visual experience induced changes in preferred orientation: once network representations are aligned, an individual neuron that responds to a specific orientation when the left eye is stimulated will respond to the same orientation when the right eye is stimulated.
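To make the idea of an interocular mismatch concrete, the sketch below estimates a neuron's preferred orientation from its responses to a few test orientations, then measures how far apart the left-eye and right-eye preferences are. It is an illustrative assumption rather than the method used in the study: it relies on a common vector-averaging approach on doubled angles (orientation repeats every 180°), and the function names and response values are hypothetical.

```python
import numpy as np

def preferred_orientation(orientations_deg, responses):
    """Estimate preferred orientation (0-180 degrees) by vector averaging.

    Angles are doubled so that 0 and 180 degrees count as the same orientation.
    """
    theta = np.deg2rad(np.asarray(orientations_deg, dtype=float))
    r = np.asarray(responses, dtype=float)
    vector_sum = np.sum(r * np.exp(2j * theta))
    return float(np.rad2deg(np.angle(vector_sum)) / 2.0 % 180.0)

def orientation_mismatch(pref_a_deg, pref_b_deg):
    """Smallest angular difference between two orientations (0-90 degrees)."""
    diff = abs(pref_a_deg - pref_b_deg) % 180.0
    return min(diff, 180.0 - diff)

# Hypothetical responses of one neuron to four orientations, per eye.
orientations = [0, 45, 90, 135]
left_responses = [1.0, 0.2, 0.0, 0.2]   # strongest response at 0 degrees
right_responses = [0.2, 1.0, 0.2, 0.0]  # strongest response at 45 degrees

left_pref = preferred_orientation(orientations, left_responses)
right_pref = preferred_orientation(orientations, right_responses)
print("mismatch (degrees):", orientation_mismatch(left_pref, right_pref))
```

In this toy picture, a neuron before visual experience could show a large mismatch, and alignment would correspond to the mismatch shrinking towards zero.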

In their next studies, Dr Fitzpatrick and his team plan to investigate network reorganisation at the synaptic scale. Their aim will be to identify precisely which components of the cortical network change and which mechanisms enable this change.

Impact of the research

The research conducted at the Max Planck Florida Institute for Neuroscience will provide a greater understanding of the mechanisms responsible for the experience-dependent alignment of cortical networks.

This knowledge is critical for addressing visual disorders that arise from early abnormalities in visual experience, such as amblyopia. This condition, also called “lazy eye”, happens when one eye no longer shares visual experience with the other and is thus unable to build a strong link to the brain, which results in poor vision for the affected eye and more reliance on the “good” eye.

Moreover, experience-guided alignment of cortical networks is likely critical not only for vision but also for a broad range of other brain functions (sensory, motor and cognitive) that are optimised for effective navigation of, and interaction with, our world. Identifying those aspects of brain circuitry that depend on early experience for proper alignment, and understanding the underlying alignment mechanisms, could offer insights into a host of neurodevelopmental disorders whose causes are still largely unknown.

Personal Response

What inspired you to conduct this research?