Dealing with diminishing returns in quantum perturbations

For many physicists, there is an ever-present desire to explain the basic building blocks of the universe in the most fundamental way possible. Currently, the most widely accepted way of doing this is a theoretical framework named ‘quantum field theory’ (QFT). Rather than treating subatomic particles as tangible spheres, as we usually picture them, this mathematical toolset describes them as excited states of underlying quantum fields. This idea eventually gave rise to the Standard Model: the theory that now forms the very basis of particle physics.

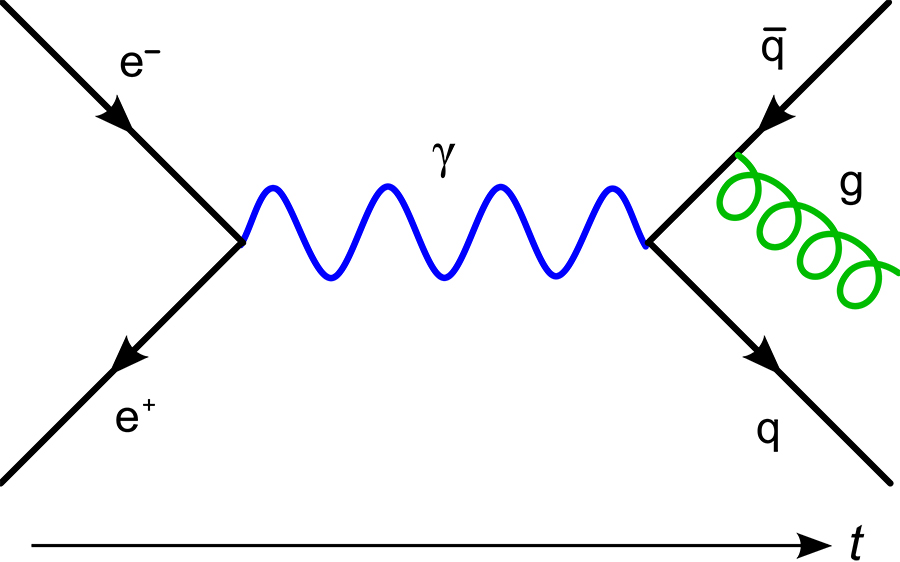

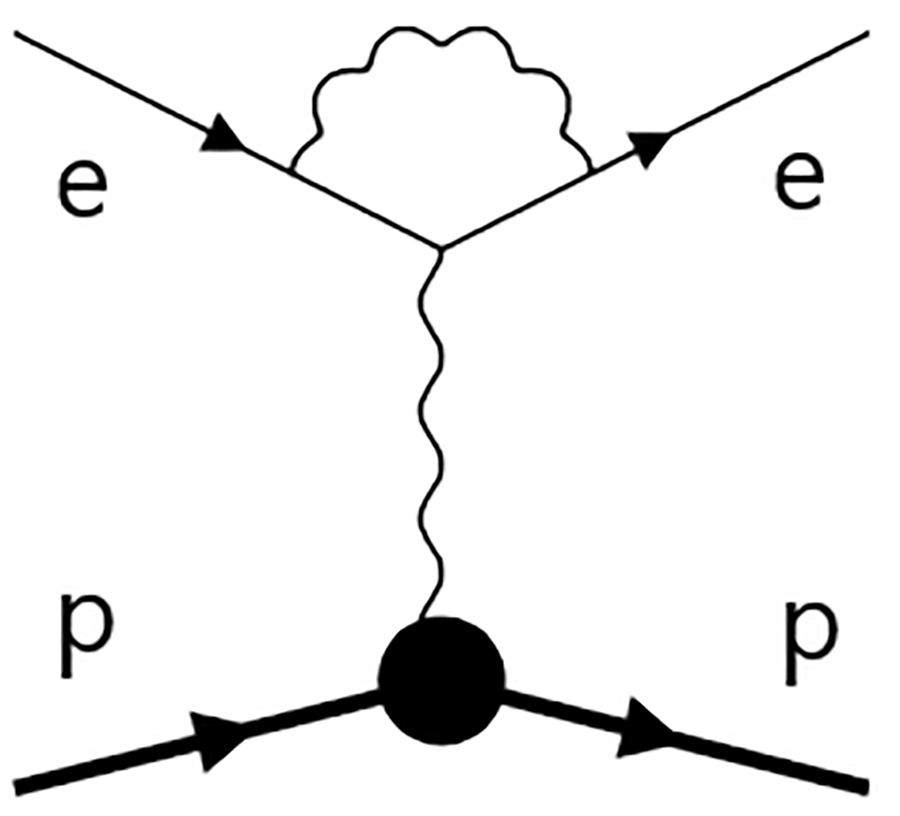

In 1948, celebrated physicist Richard Feynman devised an ingenious way to visualise the deeply complex mathematics involved in QFT. In his now-famous diagrams, the paths of particles are depicted as lines, which either end or change course when the particles interact. For example, when two particles with the same charge meet, they repel each other, but they must do so in a way that conserves their total momentum. According to QFT, they do this by exchanging momentum via a photon. In a Feynman diagram, the photon is drawn as a line connecting the two particle lines, each of which changes course sharply at its point of connection. In reality, however, many of the processes studied by physicists are vastly more complex than simple two-particle interactions.
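In symbols, if the two particles arrive with momenta p₁ and p₂ and leave with momenta p₁′ and p₂′ (labels introduced here only for illustration), conservation of momentum at each point where the photon line attaches fixes the momentum q carried by the photon:

```latex
p_1 + p_2 = p_1' + p_2', \qquad q = p_1 - p_1' = p_2' - p_2
```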

Calculating higher orders

Despite its many advantages, QFT does not represent a complete understanding of subatomic particles and their interactions. Beyond a certain level of complexity, physicists cannot describe quantum systems exactly using QFT in its current form. Instead, they rely on a mathematical toolset named ‘perturbation theory’. These calculations start from a simpler quantum system that physicists can still solve exactly, and then add small corrections that bring the description progressively closer to the more complex system of interest. Although perturbation theory never yields an exact solution, it approximates physical processes accurately enough for physicists to incorporate the results into many of their theories.
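To make this concrete, here is a minimal Python sketch (an illustration chosen for this article, not the team's code) using the quantum anharmonic oscillator, H = p²/2 + x²/2 + g x⁴, in units where ħ = m = ω = 1. The solvable starting point is the harmonic oscillator, and the x⁴ term is the perturbation. The sketch compares partial sums built from the well-known first coefficients 1/2, 3/4, −21/8 and 333/16 against a numerical diagonalisation in a truncated harmonic-oscillator basis; the basis size of 60, the coupling g = 0.01 and the function names are assumptions made for the example.

```python
import numpy as np

# Known Rayleigh-Schrodinger coefficients E_k of E(g) = sum_k E_k g^k
# for H = p^2/2 + x^2/2 + g x^4 (units hbar = m = omega = 1).
PERTURBATIVE_COEFFS = [1 / 2, 3 / 4, -21 / 8, 333 / 16]


def ground_energy_numeric(g: float, basis_size: int = 60) -> float:
    """Lowest eigenvalue of H in a truncated harmonic-oscillator basis."""
    # Annihilation operator: <n-1|a|n> = sqrt(n) sits on the superdiagonal.
    a = np.diag(np.sqrt(np.arange(1, basis_size)), k=1)
    x = (a + a.T) / np.sqrt(2.0)
    # Solvable part (energies n + 1/2) plus the quartic perturbation.
    # Truncation only distorts matrix elements near the top of the basis,
    # far from the ground state.
    h = np.diag(np.arange(basis_size) + 0.5) + g * np.linalg.matrix_power(x, 4)
    return float(np.linalg.eigvalsh(h)[0])


def ground_energy_perturbative(g: float, order: int) -> float:
    """Partial sum of the perturbation series up to and including g**order."""
    return sum(c * g**k for k, c in enumerate(PERTURBATIVE_COEFFS[: order + 1]))


g = 0.01
exact = ground_energy_numeric(g)
errors = [abs(ground_energy_perturbative(g, k) - exact) for k in range(4)]
for k, err in enumerate(errors):
    print(f"order {k}: |error| = {err:.1e}")
```

Each added order reduces the error, but by a smaller absolute amount each time.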

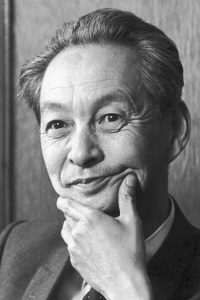

In the 1950s, Feynman, alongside pioneering Japanese physicist Shin’ichirō Tomonaga, illustrated how perturbation theory can be expressed in terms of Feynman diagrams. They showed that mathematical perturbations can be represented by closed ‘loops’, in which the paths of some particles do not connect with those either entering or leaving the process. These internal lines represent short-lived ‘virtual’ particles, which cannot be observed directly but are nonetheless instrumental in the particle interactions we do observe. However, for even more complex systems, a single perturbation is not enough to approximate the physics accurately. To compensate, higher ‘orders’ of perturbation are needed, increasing the mathematical complexity even further.

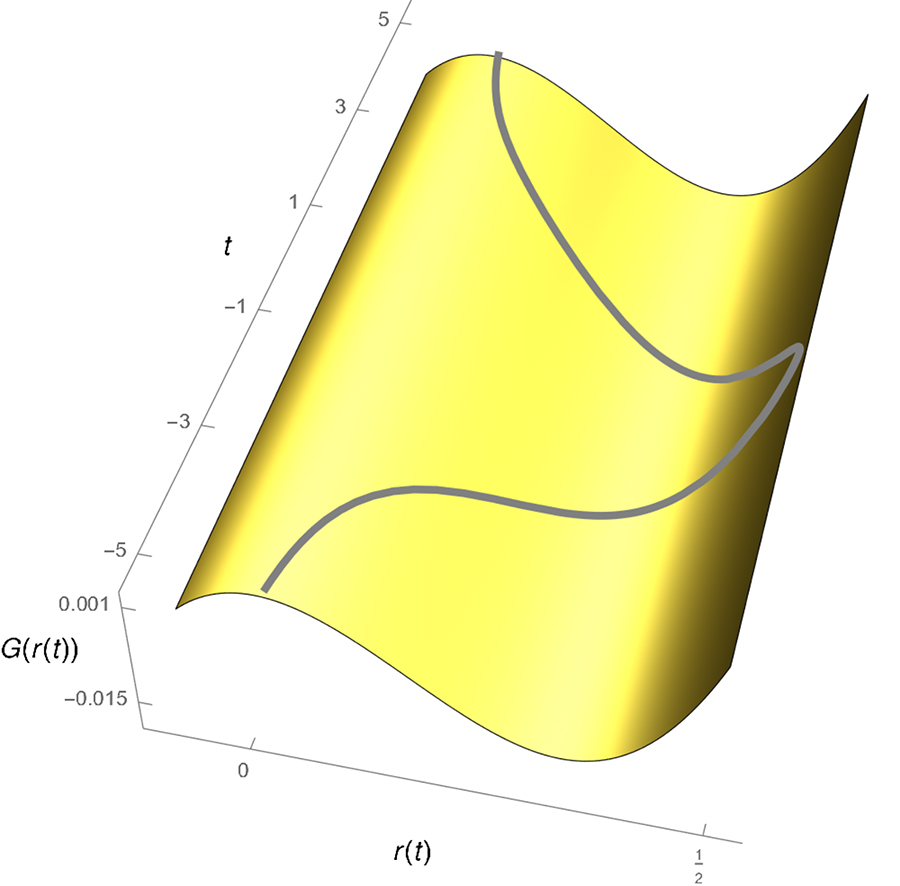

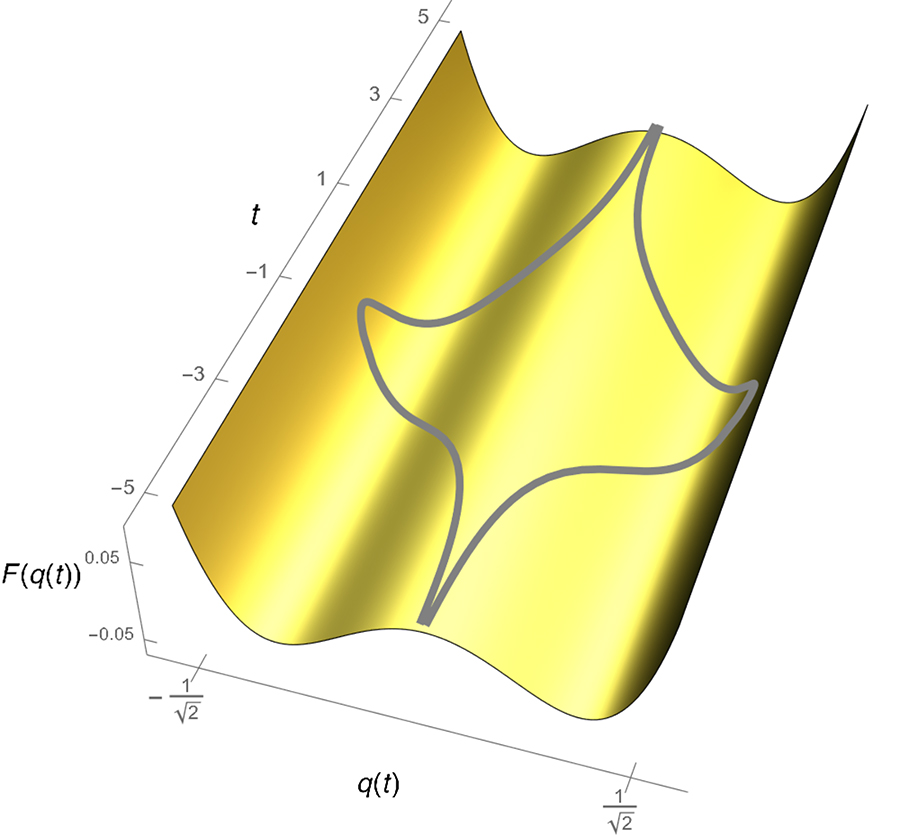

Euclidean action, defined as the time integral of the sum of the kinetic and potential energy.
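As a hedged illustration of this definition (not taken from the research described here), the Python sketch below evaluates a discretised Euclidean action along an ‘instanton’: a classical path linking the two minima of a symmetric double-well potential V(x) = λ(x² − η²)², with mass m = 1. The potential, its parameters λ = η = 1, the time grid and the function names are all assumptions chosen for the example. For this path the action is known in closed form, (4/3)√(2λ)η³, which provides a check on the numerics.

```python
import math


def euclidean_action(path, dtau, potential, mass=1.0):
    """Discretised S_E = sum over steps of [m/2 (dx/dtau)^2 + V(x)] * dtau.

    The velocity uses the difference across each step, and V is evaluated
    at the midpoint of that step.
    """
    action = 0.0
    for x0, x1 in zip(path, path[1:]):
        velocity = (x1 - x0) / dtau
        midpoint = 0.5 * (x0 + x1)
        action += (0.5 * mass * velocity**2 + potential(midpoint)) * dtau
    return action


lam, eta = 1.0, 1.0  # hypothetical double-well parameters


def double_well(x):
    return lam * (x * x - eta * eta) ** 2


# Instanton from -eta to +eta: x(tau) = eta * tanh(eta * sqrt(2 lam) * tau)
omega = eta * math.sqrt(2 * lam)
dtau = 1e-3
taus = [-10 + i * dtau for i in range(20001)]
path = [eta * math.tanh(omega * t) for t in taus]

s_numeric = euclidean_action(path, dtau, double_well)
s_exact = (4 / 3) * math.sqrt(2 * lam) * eta**3
# Mirror image x -> -x (anti-instanton): V(-x) = V(x), so the action is unchanged.
s_anti = euclidean_action([-x for x in path], dtau, double_well)
print(s_numeric, s_exact, s_anti)
```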

Diminishing returns

As theoretical physicists explore ever more complex processes, they are forced to calculate ever higher orders of perturbation. In Feynman diagrams, these higher orders appear as ‘loops within loops’, where some paths are connected to others that are themselves not directly connected to those entering or leaving the process. For researchers, this poses a serious challenge: each successive order improves the accuracy of their predictions by a smaller amount than the last. The result is a law of diminishing returns, familiar to engineers who find that ever finer adjustments to their designs bring ever more subtle improvements.
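This pattern, and what eventually happens beyond it, can be seen in a zero-dimensional ‘toy’ field theory often used for teaching, where the whole calculation collapses to a single ordinary integral, Z(g) = (2π)^(−1/2) ∫ exp(−x²/2 − g x⁴/4) dx. Its perturbation coefficients are known exactly, c_n = (−1/4)^n (4n − 1)!!/n!, so the Python sketch below (a hedged illustration; the function names and the choice g = 0.05 are assumptions) compares each partial sum of the series with a direct numerical integration. The error first shrinks by ever smaller amounts, then stops improving near order 1/(4g) ≈ 5 and grows again, because the series is asymptotic rather than convergent.

```python
import math


def coefficient(n: int) -> float:
    """Exact coefficient c_n = (-1/4)^n (4n-1)!! / n! of the toy model's series.

    Built up from c_0 = 1 with c_{k+1}/c_k = -(4k+1)(4k+3) / (4(k+1)).
    """
    c = 1.0
    for k in range(n):
        c *= -(4 * k + 1) * (4 * k + 3) / (4 * (k + 1))
    return c


def exact_z(g: float, half_width: float = 12.0, h: float = 0.01) -> float:
    """Z(g) by the trapezoid rule; the integrand is negligible at +/-half_width,
    so the endpoint half-weights are omitted."""
    steps = int(round(2 * half_width / h))
    total = math.fsum(
        math.exp(-x * x / 2 - g * x**4 / 4)
        for x in (-half_width + i * h for i in range(steps + 1))
    )
    return total * h / math.sqrt(2 * math.pi)


def partial_sums(g: float, max_order: int) -> list[float]:
    """S_0, S_1, ..., S_max_order of the perturbation series."""
    sums, s = [], 0.0
    for n in range(max_order + 1):
        s += coefficient(n) * g**n
        sums.append(s)
    return sums


g = 0.05
z = exact_z(g)
errors = [abs(s - z) for s in partial_sums(g, 12)]
best = min(range(len(errors)), key=errors.__getitem__)
for n, err in enumerate(errors):
    print(f"order {n:2d}: |error| = {err:.2e}")
print(f"best truncation order: {best}")
```

Because exp(−y) has an alternating Taylor series, each partial sum here over- or undershoots Z by at most the size of the next term, so the smallest term sets the best accuracy the series can deliver.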

Compounding this problem, the computing power needed for these calculations grows steeply with each additional order, stretching currently available algorithms to their limits. The most ambitious studies have reached six- and even seven-loop orders, achieved by physicist Bernie Nickel in the 1970s. Beyond this point, even the most sophisticated algorithms available today reach their limits and cannot feasibly be pushed much further. Until recently, these limits cast serious doubt on whether more accurate approximations of more complex quantum processes would ever be possible.

Evaluating infinite loops

An international team, comprising Ludovico Giorgini in Stockholm, Ulrich Jentschura in Missouri, Jean Zinn-Justin in Paris, and Giorgio Parisi, Enrico Malatesta and Tommaso Rizzo in Rome, has approached the problem from a different angle. In what may at first seem an impossibly tall order, the team considered the hypothetical scenario in which an infinite number of perturbation orders are included, represented by Feynman diagrams with infinitely many loops within loops. In this limit, additional orders would bring no further improvement in accuracy at all: the perturbation series has diverged, having already delivered the best approximation that QFT in its current form allows.
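The idea of a ‘best approximation’ can be made more precise for a series whose coefficients c_n grow factorially, which is the typical large-order behaviour of many perturbation series in QFT. As a generic sketch (K and a are placeholder constants, not values from this research), with g the small coupling:

```latex
\begin{aligned}
c_n &\sim K\,a^n\,n! \quad (n \to \infty), \\
\left|\frac{c_{n+1}\,g^{n+1}}{c_n\,g^n}\right| &\approx |a|\,g\,(n+1), \\
N^\ast &\approx \frac{1}{|a|\,g}, \qquad
\min_n \left|c_n g^n\right| \approx |K|\,\sqrt{2\pi N^\ast}\,e^{-1/(|a|\,g)}.
\end{aligned}
```

Successive terms shrink only while |a| g (n + 1) < 1, so truncating near order N* gives an error of roughly e^(−1/(|a|g)); adding further orders only makes the approximation worse.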

Although such calculations can never actually be carried out, the team proposes that the concept itself could be used to overcome the difficulties posed by high orders of perturbation. In their previous research, the team discussed the possibility of making educated guesses about how the mathematics of high perturbation orders may look at the point of divergence. These ideas centred on a basic quantity in QFT known as ‘correlation functions’, which describe how the properties of quantum fields at different positions are related to one another, and on how these functions can be analysed in terms of classical fields, such as electromagnetic fields. However, these earlier approaches presented further difficulties, both mathematical and computational.
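The idea of guessing very high orders from their asymptotic form can be illustrated with a zero-dimensional toy integral, Z(g) = (2π)^(−1/2) ∫ exp(−x²/2 − g x⁴/4) dx, whose exact perturbation coefficients satisfy |c_n| = (4n)!/(16^n (2n)! n!). Stirling's formula predicts |c_n| ≈ 4^n Γ(n)/(π√2) × (1 − 3/(16n) + …) at large n, without computing any individual order. The Python sketch below is a hedged illustration, not the team's calculation: the toy model, the function names and the 1/n correction (derived from Stirling's formula for this toy model only) are assumptions made for the example. It compares the prediction with the exact coefficients.

```python
import math


def log_abs_coefficient(n: int) -> float:
    """ln|c_n| with |c_n| = (4n)! / (16^n (2n)! n!), via lgamma to avoid overflow."""
    return (math.lgamma(4 * n + 1) - math.lgamma(2 * n + 1)
            - math.lgamma(n + 1) - n * math.log(16))


def log_large_order(n: int, with_correction: bool = False) -> float:
    """ln of the large-order prediction 4^n Gamma(n) / (pi sqrt 2), optionally
    multiplied by the first correction factor (1 - 3/(16 n))."""
    value = n * math.log(4) + math.lgamma(n) - math.log(math.pi * math.sqrt(2))
    if with_correction:
        value += math.log(1 - 3 / (16 * n))
    return value


def ratio(n: int, with_correction: bool = False) -> float:
    """Exact coefficient divided by its large-order prediction (tends to 1)."""
    return math.exp(log_abs_coefficient(n) - log_large_order(n, with_correction))


for n in (1, 5, 10, 50, 200):
    print(f"n = {n:3d}: leading {ratio(n):.6f}, corrected {ratio(n, True):.6f}")
```

The leading prediction is off by roughly 3/(16n), and including that single correction term shrinks the remaining discrepancy to order 1/n².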

Renormalisation groups

Building on the successes of their earlier research (in part, work by Jentschura and Zinn-Justin), the team next considered the corrections that would need to be applied to Feynman diagrams with large numbers of loops when these are analysed using correlation functions. In their latest research, they showed how the resulting quantities can be expressed within a mathematical framework known as the ‘renormalisation group’. This framework provides a systematic way to track how the behaviour of a complex physical system changes as it is examined at different scales, from microscopic to macroscopic. It is crucial for determining the critical exponents that characterise phase transitions.
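As a textbook illustration of how the renormalisation group yields a critical exponent (this is the standard one-loop ε-expansion, not the method described in this article), the Python sketch below follows the flow of the dimensionless coupling g of an N-component φ⁴ model in d = 4 − ε dimensions. In one common normalisation, the one-loop beta function is β(g) = −εg + (N + 8)g²/3 and the anomalous dimension of the mass term is γ(g) = (N + 2)g/3. The coupling flows to the Wilson-Fisher fixed point g* = 3ε/(N + 8), where the correlation-length exponent satisfies 1/ν = 2 − γ(g*). The starting coupling, step size and function names are assumptions.

```python
def beta(g: float, n_components: int, eps: float) -> float:
    """One-loop beta function mu dg/dmu of the O(N) phi^4 coupling in d = 4 - eps."""
    return -eps * g + (n_components + 8) / 3 * g**2


def gamma_mass(g: float, n_components: int) -> float:
    """One-loop anomalous dimension of the mass term (same normalisation as beta)."""
    return (n_components + 2) / 3 * g


def flow_to_infrared(g0: float, n_components: int, eps: float, dl: float = 1e-3) -> float:
    """Euler-integrate dg/dl = -beta(g), where l = -ln(mu) grows towards long distances.

    The flow length 40/eps is ample: near the fixed point, deviations decay like exp(-eps*l).
    """
    g = g0
    for _ in range(int(40 / (eps * dl))):
        g -= beta(g, n_components, eps) * dl
    return g


def nu_one_loop(n_components: int, eps: float, g0: float = 0.05) -> tuple[float, float]:
    """Return (fixed-point coupling g*, exponent nu) at one loop."""
    g_star = flow_to_infrared(g0, n_components, eps)
    return g_star, 1.0 / (2.0 - gamma_mass(g_star, n_components))


for n in (1, 2, 3):
    g_star, nu = nu_one_loop(n, eps=1.0)
    print(f"N = {n}: g* = {g_star:.4f}, nu = {nu:.4f}")
```

For N = 1 and ε = 1 (the three-dimensional Ising class), this gives ν = 0.6, against an accepted value of about 0.63; higher orders in ε, together with resummation of the resulting divergent series, are needed to close the gap.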

Here, the team applied correlation functions to a simpler case than those they had investigated previously, which allowed them to better assess the consequences of their educated guesses. To test their approach, they used it to analyse a simulated, one-dimensional system of quantum particles, which transitioned into different phases as the temperature was varied.

Such systems are difficult to study because of quantum entanglement, which creates intricate webs of cross-talk between the properties of individual particles. As a result, approximating them accurately requires high orders of perturbation. With the help of correlation functions, the research team could make educated guesses about the mathematics of these higher orders, expressed in the language of the renormalisation group. This enabled them to accurately approximate how phase transitions would unfold within the system.

Skipping tedious calculations

This approach circumvents the need to calculate every individual order of perturbation when approximating solutions in QFT. Instead, it allows researchers to jump straight to the mathematics needed to approximate high orders, using just a small fraction of the computing power required by conventional perturbation theory. If their ideas become widely accepted, the team hopes they could bring about significant advances in the many branches of theoretical physics whose calculations are constrained by the available computing power.

Through renormalisation group analysis, the team predicts that future studies could gain unprecedented insights into the properties of highly complex systems, including large groups of entangled quantum particles. Ultimately, they conclude that the predictive limits of perturbation theory can be overcome through an improved understanding of the fundamental aspects of QFT. As researchers continue to probe the fundamental properties of our universe in ever finer detail, this knowledge could soon be advanced further still.

Personal Response

What inspired you to conduct this research?

What kinds of system could be better studied using your approach?