A new frontier for hate

Extremism is as old as civilisation, but those advocating hate can now spread their ideas more widely using the Internet. Billions of users take part in a global flow of information, and some nations, such as China, try to restrict this potent flow because they see it as a political or cultural challenge to orthodox power structures.

Over the last seven years, James Hawdon and his colleagues have examined online hate speech across seven countries that have high levels of Internet access and varying, but mostly low, levels of government restriction. These range from the almost absolute freedom of speech entrenched in US law to countries with more robust anti-hate speech laws, such as Germany and the UK.

During this time, the research group has documented an increase in online hate that attacks groups based on their race, ethnicity, nationality, sexual orientation, religion, or some other group characteristic. This is a form of cyberviolence that, unlike bullying or stalking, is not aimed at an individual and has no specific victim. In the researchers’ words, “these materials, which include text, videos, photos, games, and other modes of communication, express hatred or degrading attitudes toward a collective”.

Many studies have confirmed the negative impact of hate materials. As one of Hawdon’s collaborative works states, “This kind of hate speech has many detrimental effects … exposure to hate materials correlates with a range of negative behavioural and attitudinal outcomes, such as decreased social trust, intergenerational transmission of extremist ideology, reinforced discrimination against targeted groups, and fear and anger among members of a targeted group. Exposure to extremist materials has also been linked to acts of violence.”

The most extreme example of this is the rise of ISIS and its use of the Internet and social media to radicalise and recruit members and to plan violent actions internationally. Until recently, most research into this topic was conducted in English-speaking countries; more recent studies, however, have widened their scope to include Finland, France, Germany, Poland, and Spain. Given the increasingly global nature of hate groups, and the possibility that other pan-national hate movements similar to ISIS may emerge, research clearly needs to widen its scope further still.

Who is making it?

Dr Hawdon and others have conducted many studies into who creates online hate, what leads people to see it, and what can be done to fight it. Organised hate groups have had an ever-increasing presence on the Internet since its inception, but most online hate is created by individuals maintaining websites or commenting on social media, and the number of people creating it has increased. In one study, for example, 7% of Americans admitted to producing online hate in 2013; by 2018, 21% admitted to doing so.

While there are many types of extremism, right-wing extremism currently dominates the Internet, or certainly the English-speaking part of it, and online hate producers tend to adopt an anti-cosmopolitan worldview that sees their nation and culture as being under attack by immigration and multiculturalism. This worldview also has misogynistic and anti-feminist tendencies, so it is no surprise that men produce more of this kind of hate material than women.

Members of these groups also share feelings of collective victimisation, and those who feel a strong attachment to these online communities of like-minded individuals are more likely to produce hate materials. Self-control appears to be another factor: producers of hate tend to have low self-control, which reflects an inability to foresee, and an insensitivity to, the consequences their behaviour could have for themselves or for the targets of their hate.

How has it spread?

Various studies demonstrate the processes of ‘flocking’ and ‘feathering’. People who adopt an anti-cosmopolitan worldview ‘flock’ together, and once they do, ‘feathering’ takes place as they learn and adopt the attitudes of other group members, says Dr Hawdon. This process can be amplified online as people join ‘filter bubbles’ that increase their contact with like-minded people while limiting their exposure to alternative perspectives. The research group found that this can reinforce negative worldviews that people then become comfortable spreading. As the number of sites spreading hate has grown, so has the number of people seeing it, and hate groups are aware of this: “According to one German right-wing extremist recruiter, the Internet is ‘the easiest way to make contacts and to take over and coordinate responsibilities, to gain reputation and to advance’.”

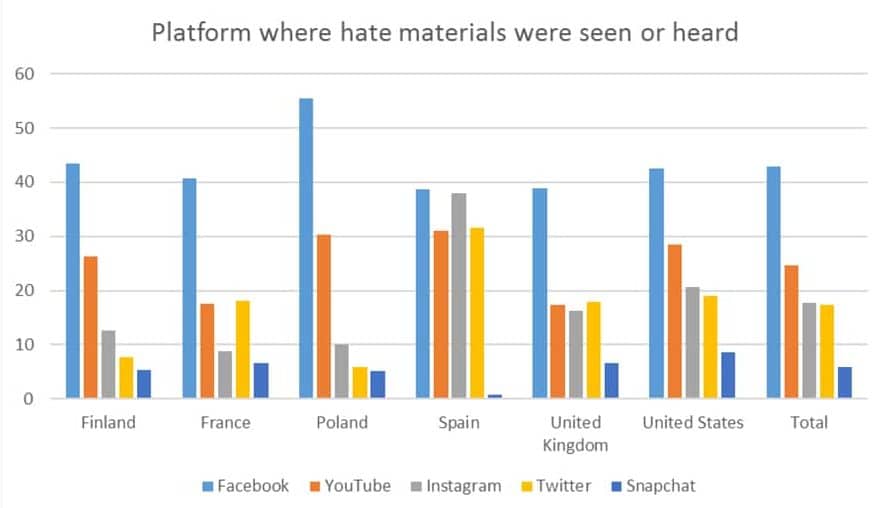

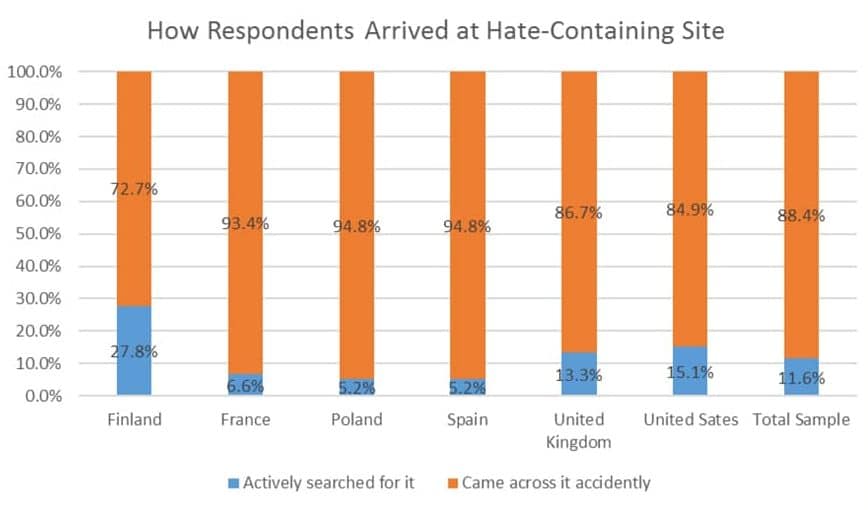

The situation is not helped by the algorithms that sites such as YouTube, Reddit and Facebook use, as these ‘recommend’ things that users might like and can lead them to hate material. Once a user has consumed hate-themed material, such material is more likely to be recommended to them again. These recommendations may also make the individual more likely to find a like-minded community, tightening their ‘filter bubble’.

Users can also be exposed to hate materials through links on sites that focus on ‘alternative’ topics, many of them anti-government, or on highly libertarian websites such as 4chan, which not only attract younger visitors but also host a wealth of extremist (and anonymously posted) content. There is also evidence that younger people are more likely to seek out hate materials if their parents or guardians forbid them, possibly as a form of rebellion.

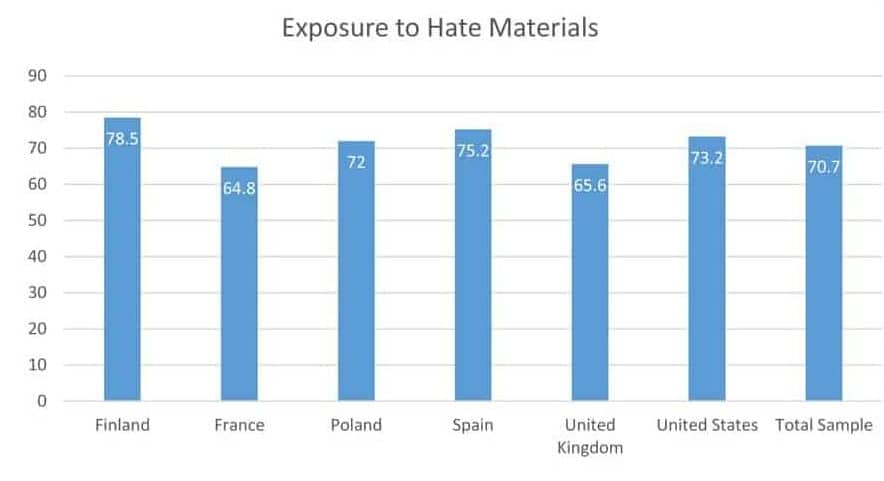

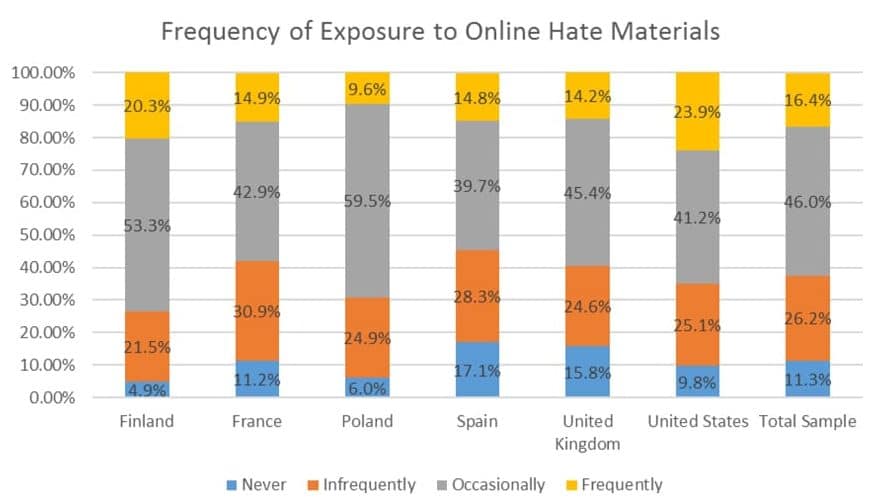

One study showed that the percentage of Americans aged 18 to 25 who saw online hate rose from 53.9% in 2013 to 73.2% in 2018. The researchers also found that certain online routines increase the chances of seeing online hate: spending more time online, using multiple social networking sites, adding strangers to one’s social media networks, and expressing controversial opinions online. Having an anti-cosmopolitan worldview increases those chances as well.

What can be done about it?

The most recent cross-national studies suggest that enforcing anti-hate speech laws may limit exposure to hate materials. Anti-hate groups, social media platforms and tech firms have also taken steps, as Dr Hawdon explains: “The Counter Extremism Project, for example, uses social media and technological tools to identify extremist threats and directly counter extremist ideology and recruitment. Similarly, Google’s European division has announced plans for improving its ability to interrupt this process by using better machine learning to identify extremist videos, employing independent human flaggers to detect content that promotes hate activity, making extremist videos more difficult to find, and redirecting searches for radical content through anti-terrorism videos.”

However, freedom of speech protections can limit the effectiveness of such laws, as websites on a global network can operate from countries with the strongest protections, such as the USA. Social networking platforms may try to fight hate by removing messages, but the sheer volume of messages on these platforms makes this difficult. People also exercise ‘informal social control’ online, using peer pressure to argue with those who post hate or trying to create an anti-hate culture.

In Dr Hawdon’s studies, over two-thirds of respondents reported that they tell people expressing hate online to stop, or that they defend the attacked group. He suggests that this online social control may convince extremists that, somewhat ironically, a tolerant society does not tolerate extremist ideologies. This may create a more tolerant virtual world and, with luck, disrupt the radicalisation of the next perpetrator of hate-based violence.

However, as most people become more entrenched in their ‘filter bubbles’ and increasingly ‘flock’ to those who share their worldview, this becomes more and more difficult. More research is therefore needed, not just in the Western world and among young people, but across the globe and in every age group.

Personal Response

What sparked your interest in cyberviolence?